Announcing "Food Announcer", a food delivery robot

Last month our company had its yearly Hackathon.

The hardest part of any Hackathon is, of course, choosing which project to take on.

I knew I wanted to break out of my comfy web development bubble and mess around with hardware.

A coworker suggested I set out to find a solution to one of the major hardships of office life: unclaimed food delivery bags at the front desk.

Basically, you can be starving at your desk and not know that the lunch you ordered arrived 20 minutes ago and is just waiting for you to come get it.

The concept was to somehow identify the owner of the bags placed at the front desk and notify him or her by email.

Luckily two other very talented devs thought this was cool, and after two days we created Food Announcer. Check out this video to see it in action:

How Food Announcer works

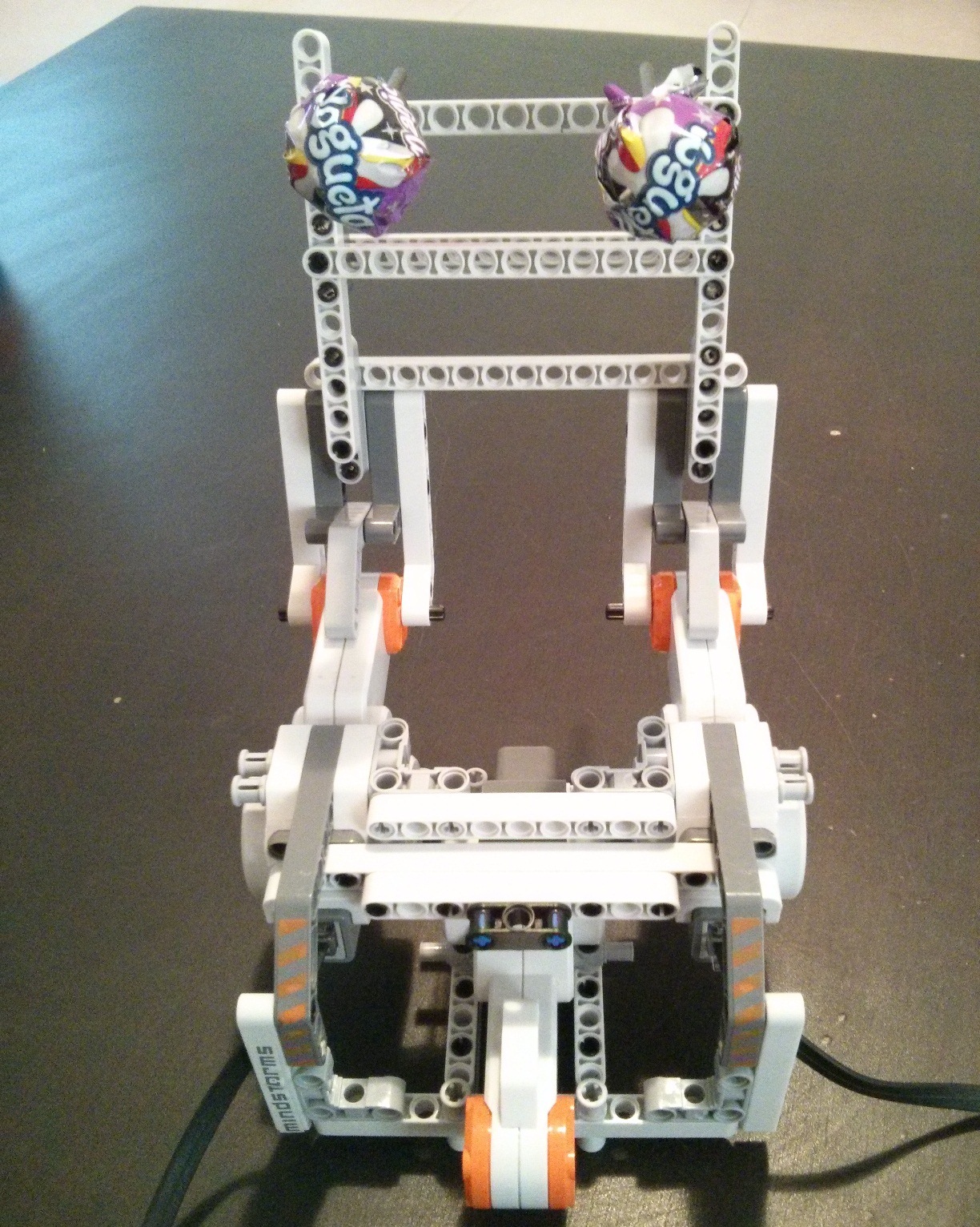

- The delivery guy places the bag in the dedicated box. A marking on the box shows which direction the label should face.

- A proximity sensor identifies that a bag is present.

- A camera takes a photo of the bag and label.

- We use an open-source OCR library called "Tesseract" to convert the image into text.

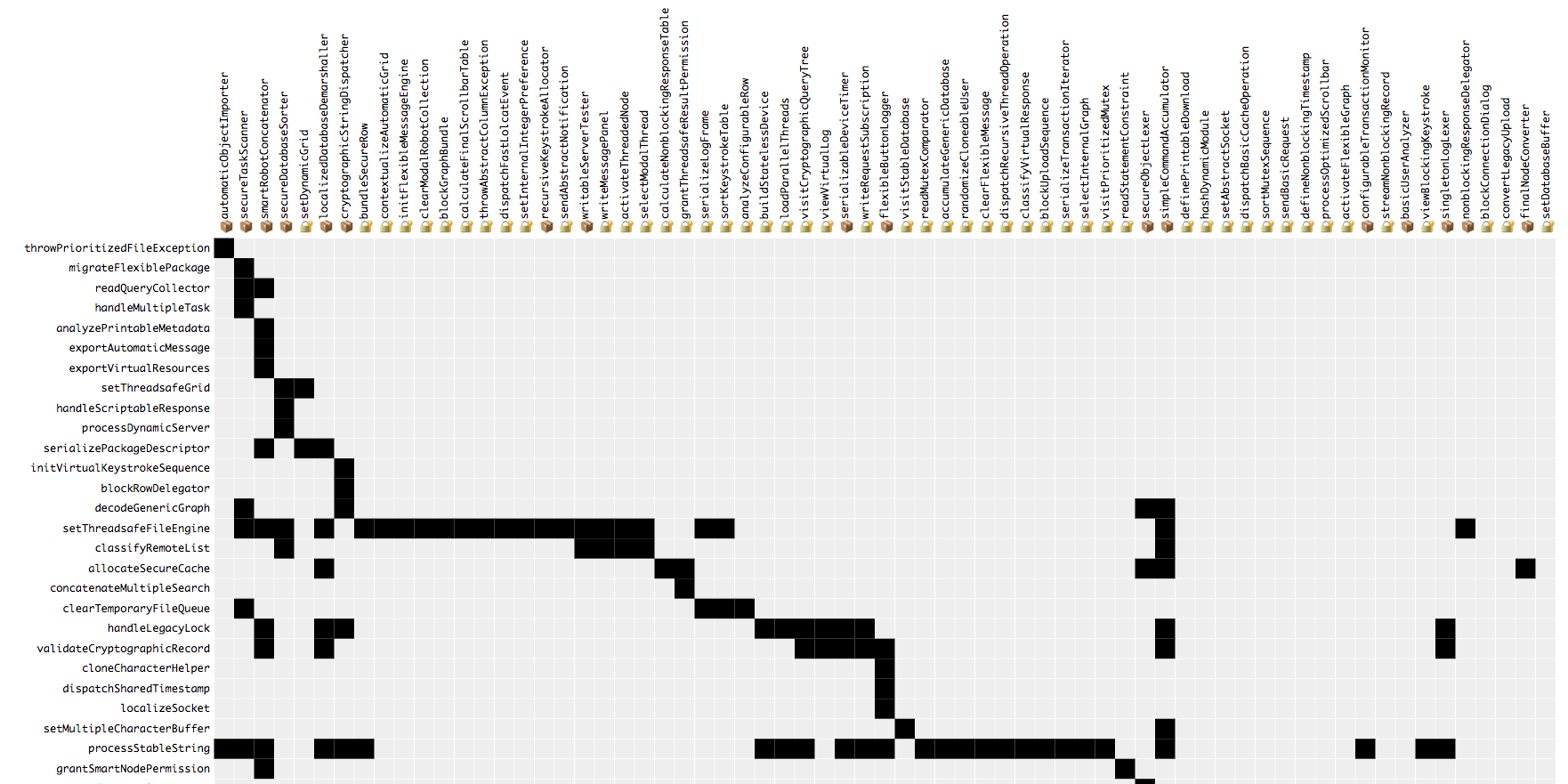

- We check if the converted text contains one of 122,000 variations on the names of the company employees. This helps us find a match even if the OCR library added, deleted, or substituted one of the letters in the person's name.

- A joyous email is sent to the hungry patron.

- Throughout the process we use text-to-speech to let the delivery guy know what's going on and whether the match was successful.

Food Announcer does one more thing. Prior to getting started we noticed that a few of the delivery guys that come to our office help themselves to the candy that's on the front desk. We concluded that all food delivery professionals have a weakness for candy.

To motivate them to place the latest bag in the box with the label facing the right way, after a match is found the robot raises its mechanical arm and offers a lollipop.

The hardware we used

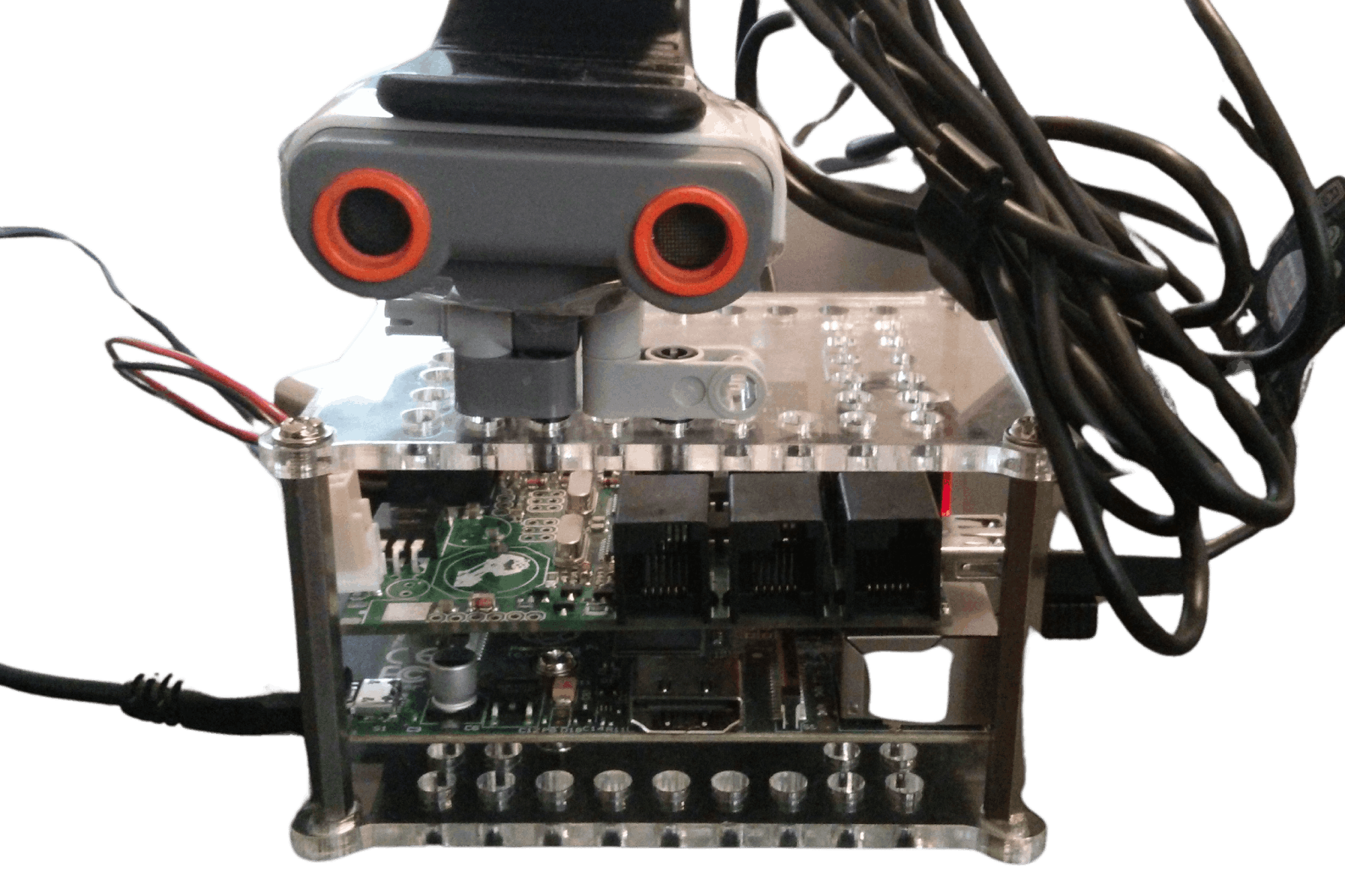

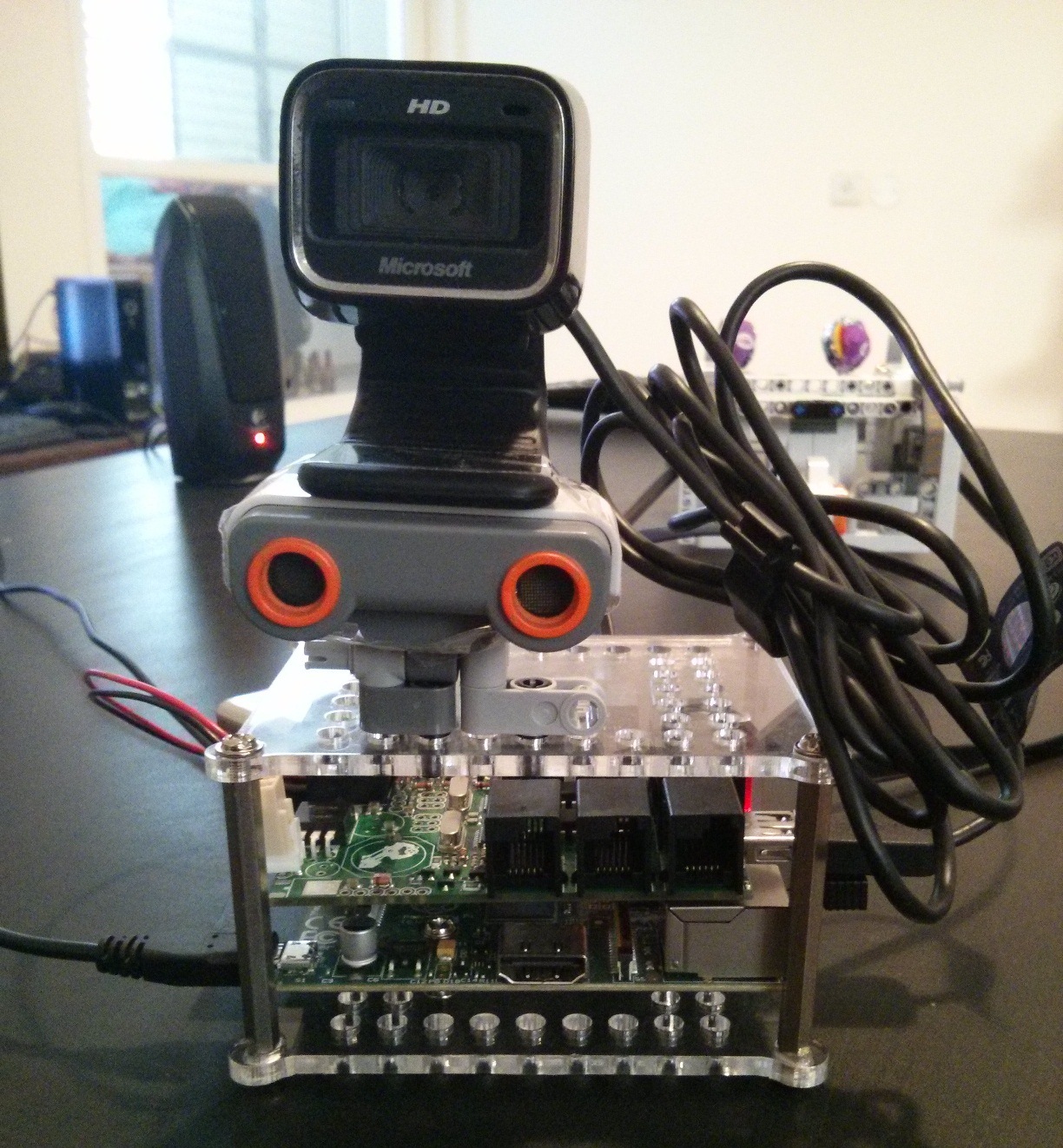

- Raspberry Pi: Like many others, I purchased this tiny computer and have been looking for more cool uses for it.

- Lego Mindstorms NXT: A robotics kit which includes sensors, motors, and Lego Technic pieces.

- BrickPi: A product of a successful Kickstarter campaign. It is a board that connects the Mindstorms sensors and motors to the Raspberry Pi.

- Microsoft LifeCam HD-5000 webcam.

Lessons learned

Use transformations where OCR falls short

We were very happy to find Tesseract when we searched for free OCR solutions on the web. It was very easy to get started with. However, with our input we never got perfect results. It had difficulties with some characters in particular, and would substitute visually similar characters for them.

As mentioned above, we solved this by calculating a list of transformations on the names of the employees once, and matching the recognized text against that list.
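To make the idea concrete, here is a minimal sketch of one way to precompute such a list. It is not our actual Hackathon code: the function names, the lowercase ASCII alphabet, the single-word names, and the word-by-word matching are all assumptions made for illustration. It generates every string that is one inserted, deleted, or substituted letter away from each name, then looks up each word of the OCR output in that table.

```python
import string
from typing import Dict, List, Optional, Set

ALPHABET = string.ascii_lowercase  # assumption: names use plain ASCII letters


def single_edit_variants(name: str) -> Set[str]:
    """Return the name plus every string one insertion, deletion,
    or substitution away from it."""
    name = name.lower()
    variants = {name}
    for i in range(len(name) + 1):
        # Insert one letter before position i (or at the end).
        for letter in ALPHABET:
            variants.add(name[:i] + letter + name[i:])
        if i < len(name):
            # Delete the letter at position i.
            variants.add(name[:i] + name[i + 1:])
            # Substitute the letter at position i.
            for letter in ALPHABET:
                variants.add(name[:i] + letter + name[i + 1:])
    return variants


def build_lookup(employees: List[str]) -> Dict[str, str]:
    """Map every variant back to the employee name it came from.
    Computed once, up front."""
    lookup: Dict[str, str] = {}
    for employee in employees:
        for variant in single_edit_variants(employee):
            # If two names share a variant, the first employee wins.
            lookup.setdefault(variant, employee)
    return lookup


def find_employee(ocr_text: str, lookup: Dict[str, str]) -> Optional[str]:
    """Return the first employee whose variant appears as a word in the text."""
    for word in ocr_text.lower().split():
        token = word.strip(string.punctuation)
        if token in lookup:
            return lookup[token]
    return None
```

With a table built from the hypothetical names `["dana", "yossi"]`, a label that the OCR misreads as "Dona" still resolves to "dana". Short names produce variants that collide with ordinary words more easily, so a real deployment would likely need a minimum name length or matching on full names.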

Alternatively, we could have provided Tesseract with the names of the employees as the dictionary of the custom language it was matching against.

Aggregate proximity sensor readings

The proximity sensor generated a noisy signal, which meant it would sometimes report a nearby object when nothing was there. We overcame this by applying a moving average window to the readings.

We also added a short delay between detection and taking the photo, in case the bag is coming from above.
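The moving average can be sketched roughly like this. The class name, window size, and threshold are illustrative assumptions rather than the values we used, and the sketch assumes each reading has already been reduced to 1 (object detected) or 0 (nothing detected).

```python
from collections import deque


class ProximityFilter:
    """Smooths noisy detections with a fixed-size moving average window."""

    def __init__(self, window_size: int = 5, threshold: float = 0.5) -> None:
        # deque with maxlen drops the oldest reading automatically.
        self.readings = deque(maxlen=window_size)
        self.threshold = threshold

    def update(self, reading: float) -> bool:
        """Add one reading and report whether a bag is considered present."""
        self.readings.append(reading)
        if len(self.readings) < self.readings.maxlen:
            # Not enough history yet to trust the average.
            return False
        average = sum(self.readings) / len(self.readings)
        return average >= self.threshold
```

A single spurious 1 in a run of 0s never pushes the average over the threshold, so it is ignored. Once `update` returns `True`, the caller would wait for the short delay mentioned above before triggering the camera.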

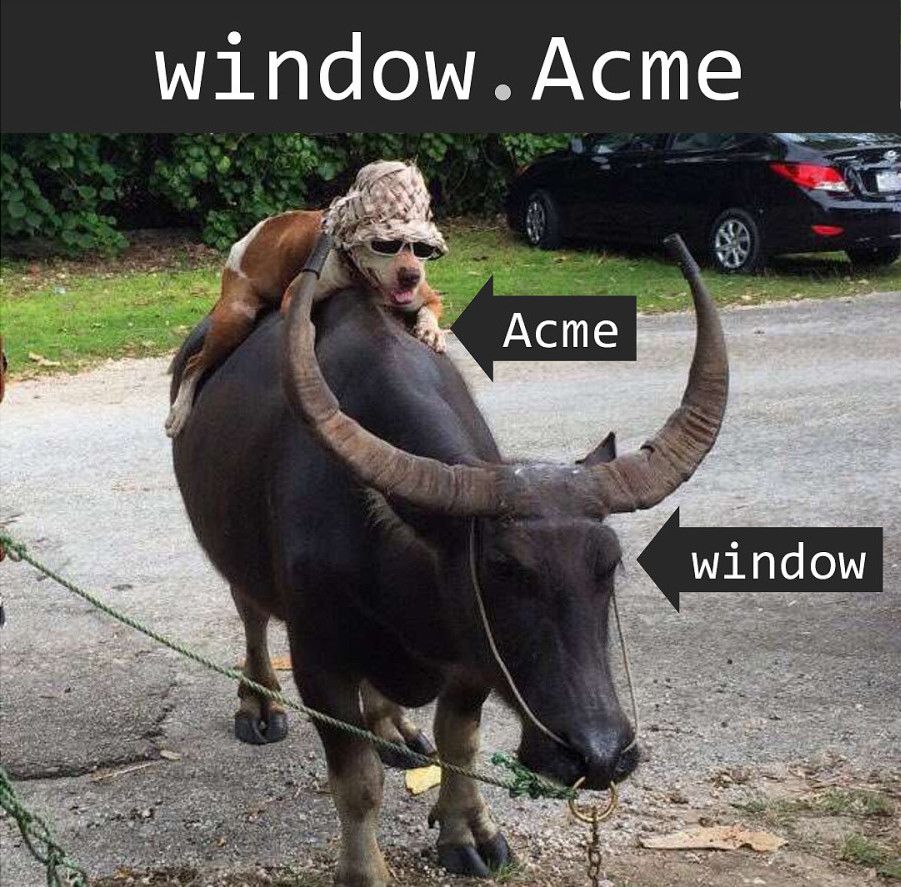

Perform heavy calculations off the Raspberry Pi

Initially the OCR, string matching, and email sending were all done on the Pi. We then moved that work to a web server on a dev machine, and the Pi sends it a request after each picture is taken. Running the analysis on a dev machine, rather than on the Pi's ARM processor with its limited memory, means the process takes a few seconds instead of minutes.
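The split between the Pi and the dev machine might look something like the sketch below, using only Python's standard library. Everything specific here is an assumption for illustration: the handler, the JSON response shape, and `analyze_image`, which is a stub standing in for the OCR, name matching, and email steps.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.request import Request, urlopen


def analyze_image(image_bytes: bytes) -> dict:
    """Stub for the heavy work done on the dev machine (OCR, matching, email)."""
    return {"bytes_received": len(image_bytes), "match": None}


class AnalyzeHandler(BaseHTTPRequestHandler):
    """Runs on the dev machine: accepts a photo in the POST body."""

    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        image_bytes = self.rfile.read(length)
        body = json.dumps(analyze_image(image_bytes)).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args) -> None:
        pass  # keep the console quiet in this sketch


def send_photo(url: str, image_bytes: bytes, timeout: float = 30.0) -> dict:
    """Runs on the Pi: uploads the photo and returns the server's verdict."""
    request = Request(
        url,
        data=image_bytes,
        headers={"Content-Type": "application/octet-stream"},
        method="POST",
    )
    with urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

On the dev machine, `HTTPServer(("0.0.0.0", 8000), AnalyzeHandler).serve_forever()` would start the server (the port is arbitrary). The Pi then only needs to capture the image, call `send_photo`, and speak the result.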

Disable autofocus

By default the webcam is set to use autofocus. In theory this should be helpful when the bag is placed at different distances from the camera. But in practice we found that it gets the focus wrong most of the time. By setting the focus to a fixed value and forcing the user to place the bag at a fixed distance from the camera (using the box), we were able to get clearer pictures.

Bon Appetit!